Setup Guide · RAY-L v0.6

Get RAY-L running in Blender.

RAY-L connects Blender with Stable Diffusion and Flux.1 dev via ComfyUI – everything runs locally on your machine. No cloud, no subscription, no vendor lock-in – all components are open source. This guide walks you through the complete setup from scratch.

Requirements

Blender

Your homebase. You build and light your scene here: composition, camera, materials, geometry, everything that defines where things are and how light hits them. RAY-L lives inside Blender as a panel in the Render Properties (the camera icon in the Properties Editor).

Stability Matrix

An installer and launcher for local AI tools. Getting Stable Diffusion and ComfyUI running on macOS without it means dealing with Python environments, dependency conflicts, and a lot of Terminal troubleshooting. Stability Matrix handles all of that: it installs ComfyUI with a single click, manages model folders, and keeps everything up to date. During daily work you don't interact with it directly; you just launch ComfyUI from it and let it run in the background.

ComfyUI

The local engine that runs Stable Diffusion on your machine. It receives the render from Blender, processes it through the AI pipeline, and sends the result back. RAY-L communicates with ComfyUI via a local API at 127.0.0.1:8188, so no data leaves your machine.

Checkpoint model

The image generation model, the actual AI brain. RAY-L supports two models: Juggernaut XL Ragnarok for SDXL (6.6 GB, works on 16 GB RAM) and Flux.1 dev for higher quality output (23.8 GB, requires 32 GB+ RAM). Both specialize in photorealistic results. You switch between them directly in the RAY-L panel.

Flux.1 dev

An optional but powerful upgrade to Juggernaut XL. Flux.1 dev is a newer-generation model that produces higher quality photorealistic output and understands natural language prompts, so no keyword lists are required. It requires at least 32 GB of unified memory.

ControlNet

This is what makes RAY-L fundamentally different from just prompting an AI. Without ControlNet, Stable Diffusion or Flux ignores your Blender geometry and generates whatever it wants. A Canny edge map of your render (every wall, corner, and silhouette) is passed to ControlNet, which forces the AI to stay within that structure while generating new materials and atmosphere. Your composition stays exactly as you defined it in Blender. Only the surface quality changes.

RAY-L

The connector. RAY-L sits in Blender's Render Properties and handles everything between the two worlds: it reads the Render Result buffer, runs the Canny edge extraction locally via OpenCV, builds the ComfyUI workflow, sends both the render and the edge map, and displays the result back in the Blender Image Editor. You never leave Blender.

| Component | Requirement | Details |
|---|---|---|
| System | macOS · Apple Silicon | M1 / M2 / M3 / M4, 16 GB (SDXL) / 32 GB+ (Flux) |
| Disk space | ~12 GB (SDXL) / ~40 GB (Flux) | ComfyUI + models |
| Checkpoint model | Juggernaut XL Ragnarok | ~6.6 GB · SDXL · 16 GB RAM |
| Checkpoint model (alternative) | Flux.1 dev | ~23.8 GB · 32 GB+ RAM |
| ControlNet SDXL | controlnet-canny-sdxl | ~2.3 GB · safetensors |
| ControlNet Flux | flux-canny-instantx | ~3.2 GB · safetensors |
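As a rough cross-check of the disk space figures, the download sizes quoted in this guide can simply be added up. This is only a sketch: the dictionary names are made up for illustration, the sizes are the approximate values from this guide, and the space ComfyUI itself needs is not included.

```python
# Approximate download sizes in GB, as quoted in this guide.
SDXL_DOWNLOADS_GB = {
    "Juggernaut XL Ragnarok checkpoint": 6.6,
    "controlnet-canny-sdxl": 2.3,
}
FLUX_DOWNLOADS_GB = {
    "flux1-dev.safetensors": 23.8,
    "t5xxl_fp16.safetensors": 9.6,
    "clip_l.safetensors": 0.235,
    "ae.safetensors": 0.335,
    "flux-canny-instantx": 3.2,
}

# Round to two decimals to hide floating-point noise in the sums.
sdxl_total = round(sum(SDXL_DOWNLOADS_GB.values()), 2)
flux_total = round(sum(FLUX_DOWNLOADS_GB.values()), 2)

print(f"SDXL model downloads: {sdxl_total} GB")
print(f"Flux model downloads: {flux_total} GB")
```

The model files alone come to about 8.9 GB for SDXL and about 37.2 GB for Flux. The gap to the ~12 GB and ~40 GB figures is presumably ComfyUI and its dependencies.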

Performance note

On a MacBook Air with 16 GB, expect 15–30 minutes per render at 1024×1024 with SDXL. For quick feedback during setup, work at 512×512 or 768×768. A Mac Studio with 64 GB reduces render times significantly.

Install Blender 5.0

Download Blender 5.0

Go to blender.org/download and download version 5.0 for macOS Apple Silicon. This is the only version RAY-L v0.6 has been tested with.

Move to Applications

Drag Blender.app into your /Applications folder. RAY-L expects Blender at this path for Python integration to work correctly.

Install OpenCV in Blender's Python

RAY-L uses OpenCV to extract Canny edges locally — without a separate ComfyUI preprocessor node. Open Terminal and run:

If the install succeeds you'll see a confirmation line ending in Successfully installed opencv-python-headless.
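As an alternative sketch of this step, the same install can be driven from Python, for example from Blender's built-in Python console, where `sys.executable` normally points at Blender's bundled interpreter. The function names are hypothetical, and the `ensurepip` call is an assumption for the case that pip is not yet available in that interpreter. The example defaults to a dry run that only prints the commands.

```python
import subprocess
import sys

PACKAGE = "opencv-python-headless"  # package named in this guide


def pip_install_commands(python_exe=sys.executable, package=PACKAGE):
    """Return the commands that install `package` into the given interpreter."""
    return [
        [python_exe, "-m", "ensurepip", "--upgrade"],   # make sure pip exists
        [python_exe, "-m", "pip", "install", package],  # install the package
    ]


def install(dry_run=True):
    """Print each command; run it only when dry_run is False."""
    for cmd in pip_install_commands():
        print(" ".join(cmd))
        if not dry_run:
            subprocess.check_call(cmd)


install(dry_run=True)  # switch to dry_run=False inside Blender to really install
```

Building the commands around the interpreter path keeps the install tied to Blender's Python rather than the system Python, which is the usual reason this step fails.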

Verify the installation

In the same Terminal window, run a quick check:

This should print a version number such as 4.13.0. If it does, you're good.
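A minimal version of such a check, written as a small Python script, might look like the following. It has to be run with Blender's Python (not the system Python) to be meaningful; the helper name `opencv_version` is made up for this example.

```python
import importlib


def opencv_version():
    """Return the installed OpenCV version string, or None if cv2 is missing."""
    try:
        cv2 = importlib.import_module("cv2")
    except ImportError:
        return None
    return cv2.__version__


version = opencv_version()
print(version if version else "cv2 is not importable from this interpreter")
```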

Install ComfyUI via Stability Matrix

Stability Matrix is the easiest way to install and manage ComfyUI on macOS. It handles dependencies, model folders and updates — no manual Python environment setup required.

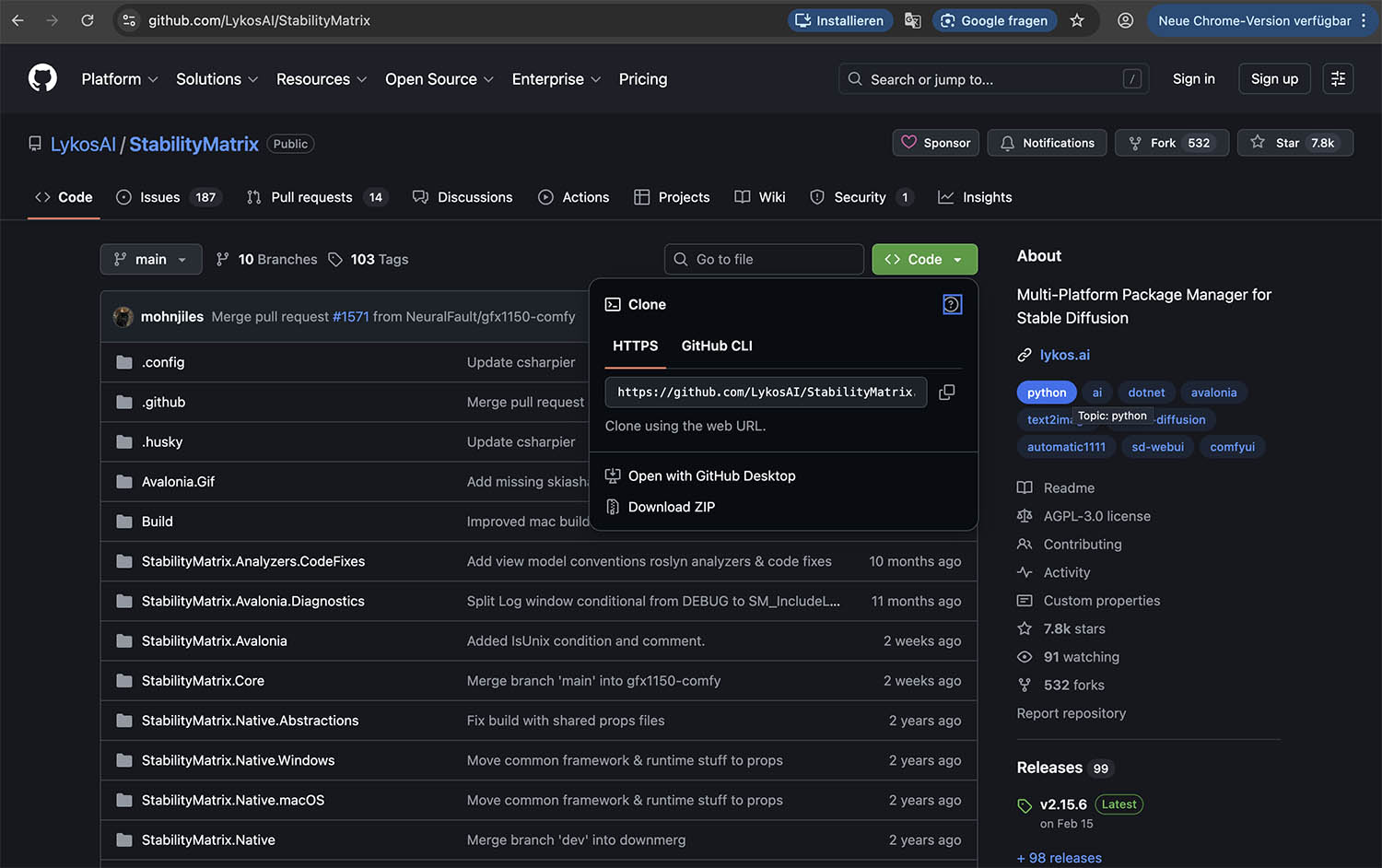

Download Stability Matrix

Go to github.com/LykosAI/StabilityMatrix and download the latest macOS release. Open the DMG and move the app to your Applications folder.

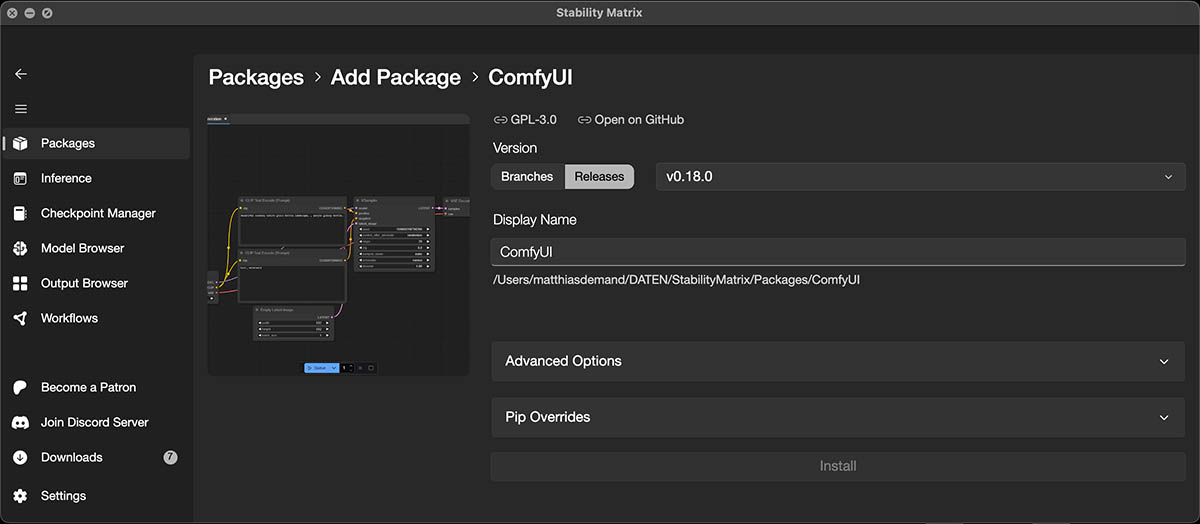

Install ComfyUI as a package

Launch Stability Matrix. On first run it will ask where to store data — choose a location with enough disk space (at least 15 GB free, ideally 40 GB+). Then click Add Package → ComfyUI → Install.

Important — skip the model selection

Stability Matrix may offer to download a starter model during installation. Skip this step and click Close. We will download the models manually in the next section so we know exactly where they are placed.

Start ComfyUI and verify it's running

Click Launch in Stability Matrix. After a moment, ComfyUI's web interface opens in your browser at 127.0.0.1:8188. Leave this running whenever you use RAY-L — it must be active in the background.
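If you prefer to check from a script whether ComfyUI is up, a simple reachability test against the local server is enough. The sketch below assumes ComfyUI's `/system_stats` route is available on your version. The function name is hypothetical, and this is not how RAY-L itself performs its connection check.

```python
import http.client
import urllib.error
import urllib.request


def comfyui_is_running(base_url="http://127.0.0.1:8188", timeout=3.0):
    """Return True if a ComfyUI server answers at base_url, else False."""
    try:
        with urllib.request.urlopen(f"{base_url}/system_stats", timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        # Connection refused, timeout, or a malformed reply: treat as "not running".
        return False


print("ComfyUI reachable:", comfyui_is_running())
```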

Note the model folder path

Stability Matrix stores everything in the folder you chose during setup. The exact path depends on where you installed it — for example ~/Documents/StabilityMatrix/ or a custom location. Inside that folder, ComfyUI's model directory is at:

Note this path — you will need it in the next step to place the model files in the correct subfolders.

Download Models — SDXL

Two models are required for SDXL. Flux.1 dev needs additional models, which are covered in the Flux.1 dev section later in this guide. Place all files in the correct subfolders inside your ComfyUI models directory.

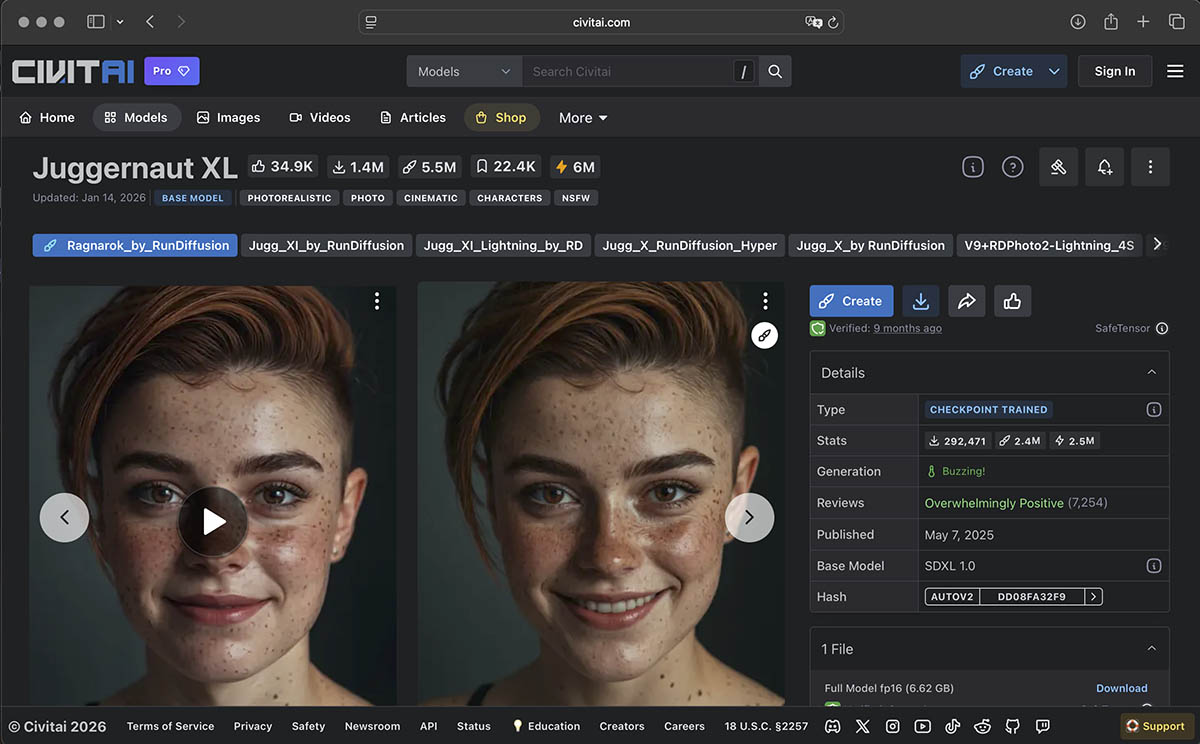

Checkpoint — Juggernaut XL Ragnarok

This is the image generation model — the brain behind the photorealistic output. Download juggernautXL_ragnarokBy.safetensors (~6.6 GB) from Civitai and place it in:

ControlNet — Canny SDXL

This model locks Stable Diffusion to your Blender geometry — it is what makes RAY-L work. Without it, Stable Diffusion ignores your geometry and generates freely.

How to download from Hugging Face:

① Go to huggingface.co/xinsir/controlnet-canny-sdxl-1.0

② Click the "Files and versions" tab at the top of the page

③ You will see two versions (V1 and V2) — download V1

④ Click the download arrow on the right side of the row for diffusion_pytorch_model.safetensors

Rename the file after download

The file downloads as diffusion_pytorch_model.safetensors. You must rename it to controlnet-canny-sdxl.safetensors before placing it in the models folder — otherwise RAY-L cannot find it. In Finder: click the file once, press Return, type the new name, press Return again.

Place the renamed file in the controlnet subfolder of your ComfyUI models directory (models/controlnet/).

If the controlnet subfolder does not exist, create it in Finder.
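The rename-and-place step can also be scripted. The sketch below is one way to do it with the standard library; `place_model` is a hypothetical helper, and the demo runs in a temporary directory with a placeholder file so nothing on your disk is touched. For real use you would pass your own Downloads path and your ComfyUI models directory.

```python
import shutil
import tempfile
from pathlib import Path


def place_model(downloaded, models_dir, subfolder, new_name):
    """Move a downloaded file into models_dir/subfolder under a new name."""
    target_dir = Path(models_dir) / subfolder
    target_dir.mkdir(parents=True, exist_ok=True)  # create the subfolder if missing
    target = target_dir / new_name
    shutil.move(str(downloaded), str(target))
    return target


# Demo in a throwaway directory with a placeholder file.
with tempfile.TemporaryDirectory() as tmp:
    downloads = Path(tmp) / "Downloads"
    downloads.mkdir()
    source = downloads / "diffusion_pytorch_model.safetensors"
    source.write_bytes(b"placeholder")

    placed = place_model(source, Path(tmp) / "models", "controlnet",
                         "controlnet-canny-sdxl.safetensors")
    placed_ok = placed.is_file() and not source.exists()
    print(placed.relative_to(tmp), placed_ok)
```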

Restart ComfyUI

After placing both files, restart ComfyUI from Stability Matrix so the models are recognized. You can verify they appear in ComfyUI's model dropdown when you open the web interface at 127.0.0.1:8188.

Install the RAY-L Add-on

Download RAY-L

Download RAY-L_v06.zip from this link. Don't unzip it; Blender installs directly from the ZIP file.

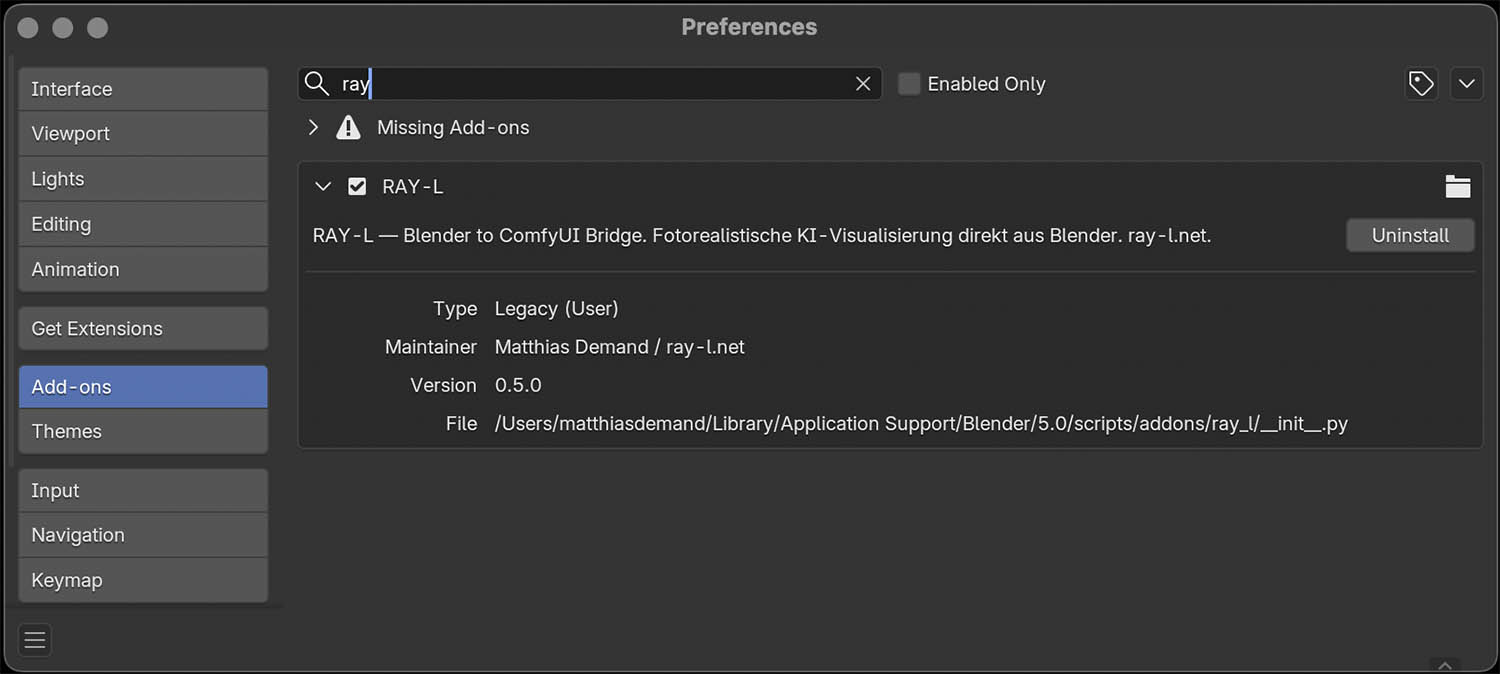

Install in Blender

Open Blender → Edit → Preferences → Add-ons. Open the drop-down menu in the top-right corner of the Add-ons tab and choose Install from Disk. Navigate to the downloaded ZIP and confirm. The add-on RAY-L: ComfyUI Bridge will appear in the list.

Enable the add-on

Check the checkbox next to RAY-L: ComfyUI Bridge to activate it. The RAY-L panel will now appear in Blender's Render Properties — the camera icon in the Properties Editor on the right side.

Verify the connection

Make sure ComfyUI is running. In the RAY-L panel, the status line should show ComfyUI connected at 127.0.0.1:8188. If it shows a connection error, check that ComfyUI is active in Stability Matrix.

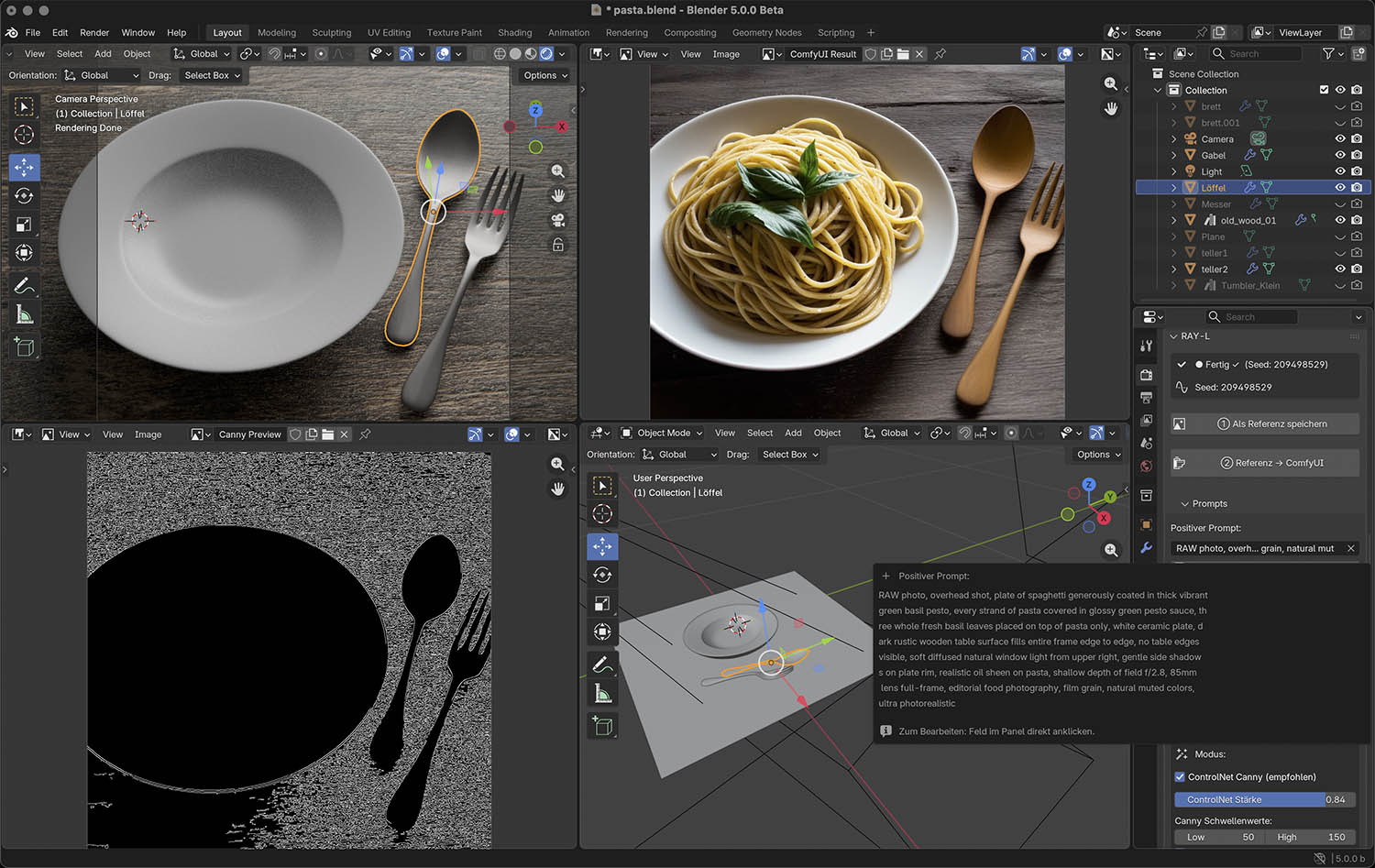

Your First Render

The daily workflow is four steps. Always follow this exact order.

Render your scene — F12

Press F12 to render with Cycles or EEVEE. Wait until the render is complete before moving to the next step. For a first test, keep resolution at 512×512 to keep waiting time short.

Save as Reference — Button ①

In the RAY-L panel, click ① Als Referenz speichern ("Save as reference"). This saves the current Render Result buffer as input for ComfyUI. Do this immediately after F12, because the buffer is overwritten once ComfyUI returns a result.

Configure Canny edge detection

In the RAY-L panel under Settings → ControlNet Canny, set the edge detection thresholds. The defaults (Low 50, High 150) work well for most scenes.

For clean architectural geometry, keep the defaults. If your render has fine surface detail (wood grain, fabric), raise Low to 80–100 to suppress noise. If important edges are missing, lower High to 100–120.
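To make the effect of Low and High more concrete, here is a simplified one-dimensional sketch of the double-threshold (hysteresis) idea behind Canny edge detection. It is neither RAY-L's code nor OpenCV's implementation: real Canny works on 2D gradients and includes smoothing and non-maximum suppression, and the gradient values below are invented for illustration.

```python
def hysteresis_1d(magnitudes, low, high):
    """Keep strong pixels (>= high) and weak pixels (>= low) chained to them."""
    n = len(magnitudes)
    keep = [m >= high for m in magnitudes]         # strong edges are always kept
    weak = [low <= m < high for m in magnitudes]   # weak edges need a kept neighbour
    changed = True
    while changed:                                 # repeat until nothing new is added
        changed = False
        for i in range(n):
            if weak[i] and not keep[i]:
                left = i > 0 and keep[i - 1]
                right = i < n - 1 and keep[i + 1]
                if left or right:
                    keep[i] = True
                    changed = True
    return keep


# Invented gradient magnitudes along one row of pixels.
row = [10, 60, 160, 120, 40, 110, 70]

default_edges = hysteresis_1d(row, low=50, high=150)     # guide defaults
raised_low_edges = hysteresis_1d(row, low=80, high=150)  # suppress fine detail
lowered_high_edges = hysteresis_1d(row, low=50, high=100)  # recover missing edges

for name, edges in [("default", default_edges),
                    ("low=80", raised_low_edges),
                    ("high=100", lowered_high_edges)]:
    print(name, "".join("#" if e else "." for e in edges))
```

In this toy row, raising Low drops the faint pixel next to the main edge, while lowering High promotes a second group of pixels to an edge of its own. That matches the tuning advice above.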

Optional: check the Canny preview

Click Canny Vorschau ("Canny preview") to see the edge map that will be sent as ControlNet input. Edges should be clean and clearly defined. Heavy noise means the render has too much texture detail at low resolution; try a slightly higher resolution.

Send to ComfyUI — Button ②

Click ② Referenz → ComfyUI ("Reference → ComfyUI"). RAY-L sends the render and Canny edge map to ComfyUI, which runs Stable Diffusion with ControlNet. The result appears automatically in the Blender Image Editor when done.
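For readers curious what "sending to ComfyUI" looks like at the API level, the sketch below builds (but does not send) a request to ComfyUI's `/prompt` endpoint. It is an illustration under assumptions, not RAY-L's actual code: the workflow is only a two-node fragment and would be rejected as incomplete if submitted, and the function name is hypothetical. The file names are the ones used in this guide.

```python
import json
import urllib.request
import uuid

COMFYUI_URL = "http://127.0.0.1:8188"


def build_prompt_request(workflow, base_url=COMFYUI_URL):
    """Build a POST request that would queue `workflow` on a ComfyUI server."""
    payload = {"prompt": workflow, "client_id": uuid.uuid4().hex}
    return urllib.request.Request(
        f"{base_url}/prompt",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Fragment of a workflow in ComfyUI's API format: node id -> class and inputs.
workflow_fragment = {
    "1": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "juggernautXL_ragnarokBy.safetensors"}},
    "2": {"class_type": "ControlNetLoader",
          "inputs": {"control_net_name": "controlnet-canny-sdxl.safetensors"}},
}

request = build_prompt_request(workflow_fragment)
print(request.get_method(), request.full_url)
```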

Important — Step order

Always press F12 first, then immediately save as Reference (①). If you click Send (②) before saving, you'll be sending an old reference image — or none at all. The Render Result buffer is overwritten after each ComfyUI pass.

Key Settings Explained

These are the parameters you'll adjust most often. Start with the defaults, then tune from there.

| Parameter | Default | What it does |
|---|---|---|
| ControlNet Strength | 0.85 – 0.95 | How strongly Blender geometry controls the result. 1.0 = exact geometry, 0.5 = loose suggestion. Start at 0.90. |
| Canny Low | 50 | Lower threshold for edge detection. Lower = more edges captured. Keep at 50 for most scenes. |
| Canny High | 150 | Upper threshold. Higher = only main structural edges. Raise if the edge map looks noisy. |
| Steps | 20 – 25 | More steps = better quality, longer render time. Use 10 steps for fast tests during setup. |
| CFG Scale | 6.0 – 7.0 | How strictly the prompt is followed. Above 10 often looks artificial. 7.0 is a reliable starting point. |
| Seed | Random | Random seed = different result each time. Fix a seed to reproduce a good result; read it from the status bar after a successful render. |
| Output size | From Blender | Automatically taken from Render Properties. Keep at 512×512 or 768×768 on MacBook Air for practical render times. |

Prompt structure that works well for photorealistic architecture:

Sampler

The tested and recommended sampler is Euler Ancestral with 25 steps and CFG 7.0. This combination is what the Juggernaut XL Ragnarok model was optimized for.
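If you keep notes on settings or script your experiments, the SDXL starting values from this section can be collected in a small data structure. This is a sketch only: the class and field names are hypothetical and do not reflect RAY-L's internals. `euler_ancestral` is the identifier ComfyUI uses for the Euler Ancestral sampler.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class SdxlSettings:
    """Starting values for SDXL taken from this guide (names are illustrative)."""
    controlnet_strength: float = 0.90
    canny_low: int = 50
    canny_high: int = 150
    steps: int = 25
    cfg_scale: float = 7.0
    sampler: str = "euler_ancestral"
    seed: Optional[int] = None  # None means a random seed per render

    def warnings(self):
        """Return human-readable warnings for values the guide advises against."""
        issues = []
        if self.canny_low >= self.canny_high:
            issues.append("Canny Low should be below Canny High")
        if self.cfg_scale > 10:
            issues.append("CFG above 10 often looks artificial")
        return issues


defaults = SdxlSettings()
fast_test = replace(defaults, steps=10)  # quick feedback during setup

print(defaults)
print(fast_test.steps, fast_test.warnings())
```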

Flux.1 dev — Optional Upgrade

Flux.1 dev is a newer, more capable model than Juggernaut XL. It produces higher quality photorealistic results and understands natural language prompts better — no keyword lists required. RAY-L supports both models and lets you switch between them in the panel.

Hardware requirement

Flux.1 dev requires at least 32 GB of unified memory for practical use. On a MacBook Air with 16 GB, Flux will fail with an out-of-memory error. A Mac Studio or Mac Pro with 64 GB is recommended.

flux1-dev.safetensors · ~23.8 GB · Place in models/unet/ · Requires Hugging Face account and license agreement

t5xxl_fp16.safetensors · ~9.6 GB · Place in models/text_encoders/ · fp16 recommended for Apple Silicon

clip_l.safetensors · ~235 MB · Place in models/clip/

ae.safetensors · ~335 MB · Place in models/vae/ · Requires Hugging Face login

flux-canny-instantx.safetensors · ~3.2 GB · Place in models/controlnet/ · Rename after download

Create a Hugging Face account and accept the Flux license

Go to huggingface.co and create a free account. Then visit huggingface.co/black-forest-labs/FLUX.1-dev and click "Agree and access repository" to accept the license terms. Without this, the download will be blocked.

After accepting, go to huggingface.co/settings/tokens, create a new token with Read access, and keep it ready for the download step.

Token security

Never share your Hugging Face token publicly. After downloading, delete or regenerate the token at huggingface.co/settings/tokens.

Download Flux.1 dev — the main model (~23.8 GB)

Go to huggingface.co/black-forest-labs/FLUX.1-dev → Files and versions → download flux1-dev.safetensors. Place it in models/unet/ inside your ComfyUI models directory.

This download takes 1–3 hours depending on your connection speed.
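If you would rather script the download than click through the browser, this is where the Read token comes in: it is sent as a bearer token with the request. The sketch below only builds the request and does not start the multi-gigabyte download. The helper names are hypothetical, and reading the token from an `HF_TOKEN` environment variable is an assumption made for this example.

```python
import os
import urllib.request


def hf_file_url(repo_id, filename, revision="main"):
    """Direct download URL for a file in a Hugging Face model repository."""
    return f"https://huggingface.co/{repo_id}/resolve/{revision}/{filename}"


def hf_download_request(repo_id, filename, token=None):
    """Build a GET request; gated repos need the token as a Bearer header."""
    headers = {"Authorization": f"Bearer {token}"} if token else {}
    return urllib.request.Request(hf_file_url(repo_id, filename), headers=headers)


# Assumption: the token is stored in an environment variable, never in the script.
token = os.environ.get("HF_TOKEN")
req = hf_download_request("black-forest-labs/FLUX.1-dev", "flux1-dev.safetensors", token)
print(req.full_url)
```

Keeping the token in an environment variable rather than in the file follows the token security advice above.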

Download T5 Text Encoder and CLIP (~9.8 GB total)

Go to huggingface.co/comfyanonymous/flux_text_encoders → Files and versions and download both files:

① t5xxl_fp16.safetensors → place in models/text_encoders/

② clip_l.safetensors → place in models/clip/

Why fp16 and not fp8?

The fp8 version does not run on Apple Silicon MPS. Always use fp16 on Mac — it is fully compatible and delivers full quality.

Download Flux VAE (~335 MB)

Go to huggingface.co/black-forest-labs/FLUX.1-dev → Files and versions → download ae.safetensors. Place it in models/vae/.

This file requires Hugging Face login — make sure you are signed in.

Download Flux ControlNet Canny (~3.2 GB)

Go to huggingface.co/InstantX/FLUX.1-dev-Controlnet-Canny → Files and versions → download diffusion_pytorch_model.safetensors.

Rename the file after download

Rename the file to flux-canny-instantx.safetensors before placing it. In Finder: click the file once, press Return, type the new name, press Return again.

Place the renamed file in models/controlnet/.

Restart ComfyUI and verify all models are recognized

Restart ComfyUI from Stability Matrix. Open the web interface at 127.0.0.1:8188 and create a new workflow. Add these four nodes and check their dropdowns:

① Load Diffusion Model → flux1-dev.safetensors

② DualCLIPLoader → t5xxl_fp16.safetensors + clip_l.safetensors

③ Load VAE → ae.safetensors

④ Load ControlNet Model → flux-canny-instantx.safetensors

All four must appear in the dropdowns before proceeding.
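Before opening ComfyUI, you can also check on disk that every Flux file sits in the subfolder this guide names. The sketch below uses the file and folder names from this section; `missing_flux_files` is a hypothetical helper. The demo runs against a temporary directory with two placeholder files, so replace that with your real ComfyUI models directory.

```python
import tempfile
from pathlib import Path

# Subfolder -> file name, as listed in this guide.
FLUX_FILES = {
    "unet": "flux1-dev.safetensors",
    "text_encoders": "t5xxl_fp16.safetensors",
    "clip": "clip_l.safetensors",
    "vae": "ae.safetensors",
    "controlnet": "flux-canny-instantx.safetensors",
}


def missing_flux_files(models_dir):
    """Return 'subfolder/file' entries that are not present under models_dir."""
    models_dir = Path(models_dir)
    return [f"{sub}/{name}" for sub, name in FLUX_FILES.items()
            if not (models_dir / sub / name).is_file()]


# Demo: a temporary models directory where only two of the five files exist.
with tempfile.TemporaryDirectory() as tmp:
    for sub in ("unet", "vae"):
        folder = Path(tmp) / sub
        folder.mkdir()
        (folder / FLUX_FILES[sub]).write_bytes(b"placeholder")
    missing = missing_flux_files(tmp)

print("Missing:", missing)
```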

Switch to Flux in the RAY-L panel

In Blender, open the RAY-L panel in Render Properties. At the top you will see two buttons: SDXL and Flux.1 dev. Click Flux.1 dev to switch.

The panel adapts automatically — the negative prompt field disappears (Flux does not use one), CFG Scale is replaced by Guidance, and the ControlNet settings show the Flux model name.

Recommended starting settings for Flux:

Prompting for Flux

Flux understands natural language — write full sentences, not keyword lists. No negative prompt is needed.

Unlike SDXL, you do not need RAW photo, 8k uhd, or camera model keywords. Flux generates photorealistic results from descriptive text alone.